This is a short write-up about why you should consider a certain network topology when adopting scale-out storage technologies in a multi-rack environment. Without going into too much detail, I want to stress that the design of your Ethernet storage network should follow the same scale-out model as your distributed storage. To be honest, it is probably the other way around: networking experts have been building scalable network architectures with consistent and predictable latency for a long time now. The storage world is just catching up.

Today, we can create highly scalable distributed storage infrastructures, following Hyper-Converged Infrastructure (HCI) innovations. Because the storage layer is distributed across ESXi hosts, a lot of host-to-host Ethernet connections will be used for storage I/O. Typically, when a distributed storage solution (like VMware vSAN) is adopted, we tend to create a pretty basic layer-2 network, preferably using 10GbE or faster NICs and line-rate capable components in a non-blocking network architecture with enough ports to support our current hosts. However, once we scale to an extensive number of ESXi hosts and racks, we face two challenges: how to provide enough switch ports to connect our ESXi hosts, and how to connect the multiple Top of Rack (ToR) switches to each other. That is where the so-called spine-leaf network architecture comes into play.
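To make "non-blocking" a bit more concrete, here is a minimal sketch that compares the host-facing bandwidth of a single ToR switch with its uplink bandwidth. The function name and the port counts are purely illustrative assumptions on my part, not a sizing recommendation for any specific switch model.

```python
def leaf_oversubscription(host_ports, host_port_gbps, uplinks, uplink_gbps):
    """Ratio of host-facing bandwidth to uplink bandwidth on one ToR switch.

    A ratio of 1.0 or lower means the uplinks can carry everything the
    hosts can send (non-blocking); a higher ratio means the uplinks are
    oversubscribed when all hosts talk to other racks at line rate.
    """
    if uplinks <= 0 or uplink_gbps <= 0:
        raise ValueError("a ToR switch needs at least one uplink with bandwidth")
    downstream_gbps = host_ports * host_port_gbps
    upstream_gbps = uplinks * uplink_gbps
    return downstream_gbps / upstream_gbps


# Hypothetical ToR switch: 48 x 10GbE host ports, 4 x 40GbE uplinks.
ratio = leaf_oversubscription(host_ports=48, host_port_gbps=10,
                              uplinks=4, uplink_gbps=40)
```

In this made-up example the ratio is 3.0, so the switch is oversubscribed 3:1 towards the rest of the network. Connecting only 16 hosts to the same switch would bring the ratio down to 1.0.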

Spine-Leaf

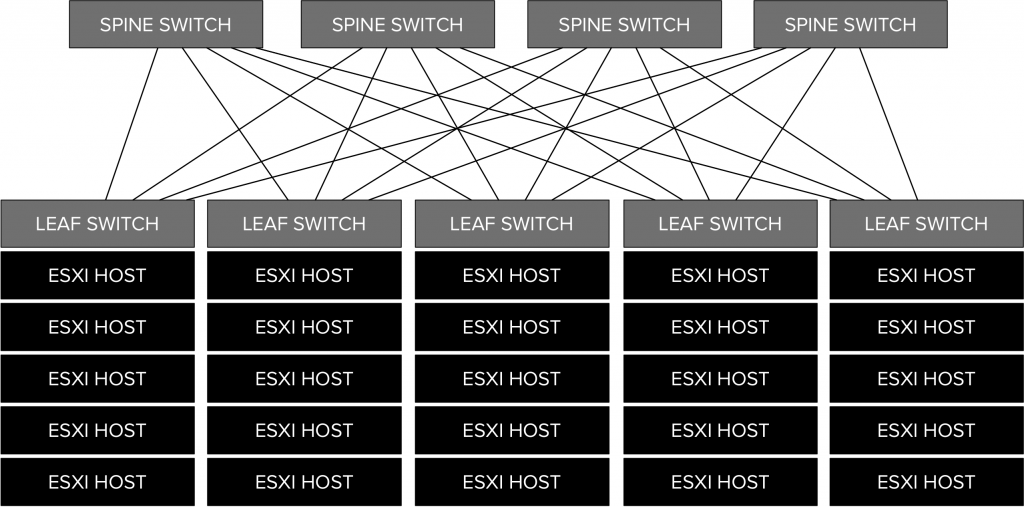

In a spine-leaf network architecture, each leaf switch connects to every spine switch in the fabric. Using this topology, a connection between two ESXi hosts in different racks always traverses the same number of network hops, no matter which racks the hosts are in. Such a network topology provides predictable latency, and thus consistent performance, even as you keep scaling out your virtual data center. It is this consistency in performance that makes the spine-leaf network architecture so suitable for distributed storage solutions.
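The constant hop count is easy to check with a small model. The sketch below builds a toy fabric in which every leaf is linked to every spine, and then counts the links on the shortest path between every pair of hosts in different racks. The node names (`spine0`, `leaf0`, `esxi-0-0`) and the fabric size are assumptions made up for the illustration.

```python
from collections import deque
from itertools import combinations


def build_spine_leaf(num_spines, num_leaves, hosts_per_leaf):
    """Return an adjacency dict where every leaf links to every spine."""
    graph = {}

    def link(a, b):
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)

    for leaf_id in range(num_leaves):
        leaf = f"leaf{leaf_id}"
        for spine_id in range(num_spines):
            link(leaf, f"spine{spine_id}")
        for host_id in range(hosts_per_leaf):
            # Host name encodes the rack (leaf) it is attached to.
            link(leaf, f"esxi-{leaf_id}-{host_id}")
    return graph


def link_count(graph, src, dst):
    """Number of links on the shortest path from src to dst (plain BFS)."""
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for neighbor in graph[node]:
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, dist + 1))
    raise ValueError(f"no path between {src} and {dst}")


def cross_rack_link_counts(graph):
    """Set of distinct path lengths between hosts in different racks."""
    hosts = sorted(n for n in graph if n.startswith("esxi-"))
    counts = set()
    for a, b in combinations(hosts, 2):
        if a.split("-")[1] != b.split("-")[1]:  # different leaf, so different rack
            counts.add(link_count(graph, a, b))
    return counts


fabric = build_spine_leaf(num_spines=2, num_leaves=4, hosts_per_leaf=3)
distinct_lengths = cross_rack_link_counts(fabric)
```

For this toy fabric `distinct_lengths` contains a single value, 4 (host, leaf, spine, leaf, host), and it stays that way when you add more leaves or spines. Two hosts under the same leaf are only 2 links apart.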

An exemplary logical spine-leaf network architecture is shown in the diagram above.

A spine-leaf topology can be a layer-2 or layer-3 network. When it is designed as a layer-2 network, the key is to create a loop-free fabric. You will also want to consider bandwidth requirements. When using VMware vSAN, the bandwidth utilization between the ESXi hosts depends strongly on the fault tolerance method defined in the VM Storage Policies and on the configured fault domains. Depending on these factors, data is written to and/or read from a certain number of ESXi hosts.
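As a rough illustration of how the storage policy drives network traffic, consider only mirroring (RAID-1): tolerating n failures means keeping n + 1 full replicas, so every guest write is written n + 1 times. The sketch below is a deliberately simplified estimate under that assumption. The function name and its parameters are hypothetical, and it ignores erasure coding, witness components, resync traffic and reads.

```python
def mirrored_write_traffic(guest_write_mbps, failures_to_tolerate, local_replicas=0):
    """Estimate network write traffic (MB/s) for a RAID-1 mirrored object.

    guest_write_mbps      -- write throughput generated by the VM
    failures_to_tolerate  -- n in the storage policy; mirroring keeps n + 1 replicas
    local_replicas        -- replicas that happen to live on the host running
                             the VM (assumption: these writes never hit the network)
    """
    replicas = failures_to_tolerate + 1
    if not 0 <= local_replicas <= replicas:
        raise ValueError("local_replicas must be between 0 and the replica count")
    remote_replicas = replicas - local_replicas
    return guest_write_mbps * remote_replicas


# A VM writing 100 MB/s, tolerating one failure, both replicas on other hosts.
worst_case = mirrored_write_traffic(100, failures_to_tolerate=1)
```

Here `worst_case` is 200 MB/s of write traffic on the network for 100 MB/s of guest writes. Which racks and leaf switches that traffic crosses depends on where the replicas are placed, which is exactly where fault domains come in.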

It can be a challenging exercise to predict bandwidth utilization between ESXi hosts when using a distributed storage platform. That is why it is a good idea to think thoroughly about your VM storage policy designs together with the storage I/O characteristics of your workloads. Network architectures like a spine-leaf topology are not only recommended for distributed storage services, but are applicable to any large multi-rack environment. It really is a good fit for every distributed service deployed in a multi-rack environment, like the distributed network services provided by VMware NSX.

When you are designing a scalable datacenter using distributed services, make sure you design your network accordingly. It could make sense to introduce a spine-leaf network from the start if you anticipate substantial future growth for your virtual datacenter.