New in vSphere 7 Update 2: vMotion can take full advantage of high-bandwidth NICs for even faster live migrations by auto-tuning the number of vMotion streams. Manual tuning, which previous vSphere versions required to achieve the same result, is no longer needed.

vMotion Stream Architecture

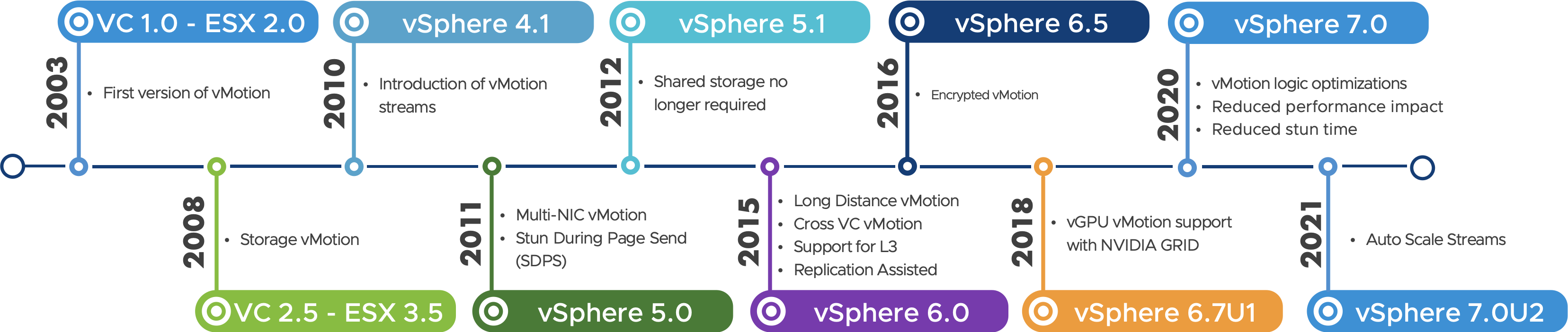

In versions before vSphere 7 Update 2, vSphere vMotion did not saturate high-bandwidth NICs, such as 25, 40, and 100 GbE, out of the box. Doing so required tuning at multiple levels: the vMotion streams, the TCP/IP VMkernel interfaces, and potentially even the NIC driver. That’s because, by default, vMotion used a single stream to handle the migration process.

One stream consists of a completion helper, a crypto helper, and a stream helper. One helper equals one CPU core. Typically, one stream helper is capable of saturating approximately 15 GbE of bandwidth. This is great for 10 GbE NICs, but it becomes a limiting factor when using 25 GbE or faster NICs. With 25, 40, and even 100 GbE quickly becoming mainstream, vMotion needs to be able to utilize this bandwidth automatically for more efficient live migration operations.
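To make the single-stream ceiling concrete, here is a minimal sketch that estimates how much of a link one stream could fill. It takes the approximate 15 GbE per stream figure above at face value; the constant and function name are illustrative only and are not part of any vSphere API.

```python
# Approximate throughput of one vMotion stream, taken from the text above.
# This is a rule-of-thumb figure, not a measured value.
STREAM_CAPACITY_GBE = 15


def single_stream_utilization(nic_speed_gbe: float) -> float:
    """Return the fraction of a NIC's bandwidth one stream could fill (at most 1.0)."""
    if nic_speed_gbe <= 0:
        raise ValueError("NIC speed must be positive")
    return min(1.0, STREAM_CAPACITY_GBE / nic_speed_gbe)


if __name__ == "__main__":
    for speed in (10, 25, 40, 100):
        share = single_stream_utilization(speed)
        print(f"{speed} GbE: one stream fills about {share:.0%} of the link")
```

Under this assumption, a single stream covers a 10 GbE link completely but leaves a large share of a 25 GbE or faster link unused, which is the gap that additional streams are meant to close.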

In previous vSphere versions, we provided ways to instantiate more streams, mainly by configuring advanced settings, so that vMotion could use more CPU power and, in turn, more network bandwidth. That resulted in faster live migrations, as more bandwidth was available to vMotion to replicate memory pages.

Note: if you previously used the manual advanced settings, make sure you revert them to their defaults so that you benefit from the Auto Stream capability in vSphere 7 Update 2.

Automatically Adjust for High Bandwidth

With vSphere 7 Update 2, the vMotion process automatically spins up the number of streams according to the bandwidth of the physical NICs used for the vMotion network(s). This is now an out-of-the-box setting. The usable bandwidth for vMotion is determined by querying the underlying NICs.

This means that all VMkernel interfaces enabled for vMotion are checked, along with the bandwidth of their underlying physical NICs. Depending on the outcome, a number of streams is instantiated. The baseline is 1 vMotion stream per 15 GbE of bandwidth. This results in the following number of streams per VMkernel interface:

- 25 GbE = 2 vMotion streams

- 40 GbE = 3 vMotion streams

- 100 GbE = 4* vMotion streams

*4 is the maximum number of vMotion streams that makes sense, because of a limit of 4 RX queues. If you need to go beyond 4 vMotion streams to fully utilize 100 GbE bandwidth for vMotion, you could still consider the manual tuning options, or increase the number of RX queues for the VMkernel interface. Be aware of the caveat that a VMkernel interface needs to be re-created after increasing the number of RX queues before the change takes effect.
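The mapping above can be sketched as a small calculation. This is only an illustration of the stated rule (1 stream per 15 GbE, capped at 4), not the actual vMotion implementation. Rounding up is an assumption that happens to reproduce the listed values, and the names used here are hypothetical.

```python
import math

GBE_PER_STREAM = 15   # baseline from the text: 1 stream per 15 GbE
MAX_AUTO_STREAMS = 4  # cap from the text, tied to the limit of 4 RX queues


def auto_stream_count(nic_speed_gbe: float) -> int:
    """Estimate the number of vMotion streams for one VMkernel interface.

    Assumption: the bandwidth is divided by the per-stream baseline and
    rounded up, then capped at MAX_AUTO_STREAMS. At least one stream is
    always used.
    """
    if nic_speed_gbe <= 0:
        raise ValueError("NIC speed must be positive")
    wanted = math.ceil(nic_speed_gbe / GBE_PER_STREAM)
    return max(1, min(MAX_AUTO_STREAMS, wanted))


if __name__ == "__main__":
    for speed in (10, 25, 40, 100):
        print(f"{speed} GbE = {auto_stream_count(speed)} vMotion stream(s)")
```

Without the cap, the same rule would ask for 7 streams on a 100 GbE NIC, which is why going beyond 4 requires manual tuning or more RX queues.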

To Conclude

vSphere vMotion keeps on getting better and better. The beauty of the updates is that you’ll benefit from the improvements automatically by installing, or upgrading to, vSphere 7 Update 2. Be sure to check out this page for more details about vMotion, including all the updates since vSphere 7.

–originally authored and posted by me at https://core.vmware.com/blog/faster-vmotion-makes-balancing-workloads-invisible–